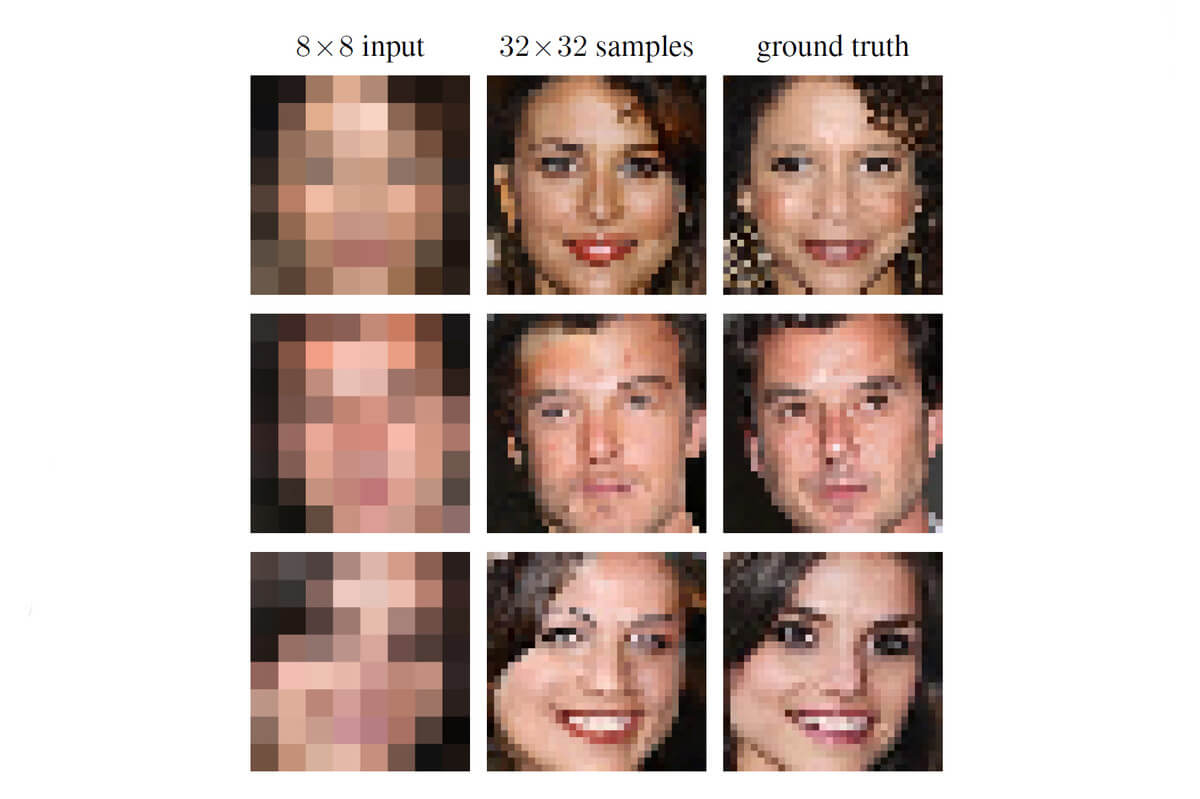

Remember those crime shows where detectives pick a suspect out of a crowd by enhancing his tiny, pixelated face and then cross-referencing it against profiles in a database? Those scenes are about to become reality (and no, the technology shown on screen didn't exist until recently). The team at Google Brain has figured out how to use machine learning to reconstruct a face from a tiny portrait. #machinemagic

Using a pair of neural networks, Google Brain approximated what a person looks like from an 8 x 8-pixel image. The first network, called the conditioning network, mapped the pixels of the tiny image onto similar higher-resolution pictures to produce a rough, higher-resolution version. The second, called the prior network, took that still-undecipherable image and added details drawn from similar pixel patterns to build a face. The outputs of the two networks were then combined into a single image.
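For readers curious how two networks' outputs can be merged into one picture, here is a toy Python sketch. It assumes the scheme as we understand it from Google Brain's paper: the image is built one pixel at a time, and each pixel's brightness is drawn from a probability distribution obtained by adding the two networks' scores (logits) and normalizing them with a softmax. The real networks are replaced by hypothetical placeholder functions (`toy_conditioning`, `toy_prior`), and every name below is our own invention, not Google's code.

```python
import math
import random

# Toy sketch, NOT Google Brain's code. Assumption: each output pixel is drawn
# from a distribution over intensity levels obtained by adding the scores
# (logits) of a conditioning network and a prior network, then applying softmax.

def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def combine_pixel_distribution(conditioning_logits, prior_logits):
    """Add both networks' scores for each intensity level, then normalize."""
    if len(conditioning_logits) != len(prior_logits):
        raise ValueError("logit vectors must have the same length")
    return softmax([c + p for c, p in zip(conditioning_logits, prior_logits)])

def generate(num_pixels, conditioning_fn, prior_fn, rng):
    """Build an image one pixel at a time (raster order).

    conditioning_fn(i) stands in for the conditioning network: scores for
    pixel i, inferred from the low-resolution input.
    prior_fn(pixels) stands in for the prior network: scores for the next
    pixel, inferred from the pixels generated so far.
    """
    pixels = []
    for i in range(num_pixels):
        probs = combine_pixel_distribution(conditioning_fn(i), prior_fn(pixels))
        levels = range(len(probs))
        pixels.append(rng.choices(levels, weights=probs, k=1)[0])
    return pixels

# Hypothetical stand-ins with only 4 intensity levels (real images use 256).
def toy_conditioning(i):
    return [0.0, 2.0, 0.5, 0.0]  # the low-res input "suggests" level 1

def toy_prior(pixels):
    # Nudge the next pixel toward the previous one, so neighbors look alike.
    scores = [0.0] * 4
    if pixels:
        scores[pixels[-1]] += 1.5
    return scores

if __name__ == "__main__":
    rng = random.Random(0)
    print(combine_pixel_distribution([0.0, 2.0, 0.5, 0.0], [0.0, 1.0, 1.5, 0.0]))
    print(generate(16, toy_conditioning, toy_prior, rng))
```

In this toy, neither network decides alone: the conditioning scores keep each pixel faithful to the tiny input, while the prior scores push it toward values that look plausible next to the pixels already drawn.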

Google has, in effect, pursued the "zoom and enhance" technique seen in the movies, although its results only approximate a suspect's face rather than identifying him outright.

Still, it's pretty impressive, though not at all surprising given the resources Google puts into image processing and machine learning.
