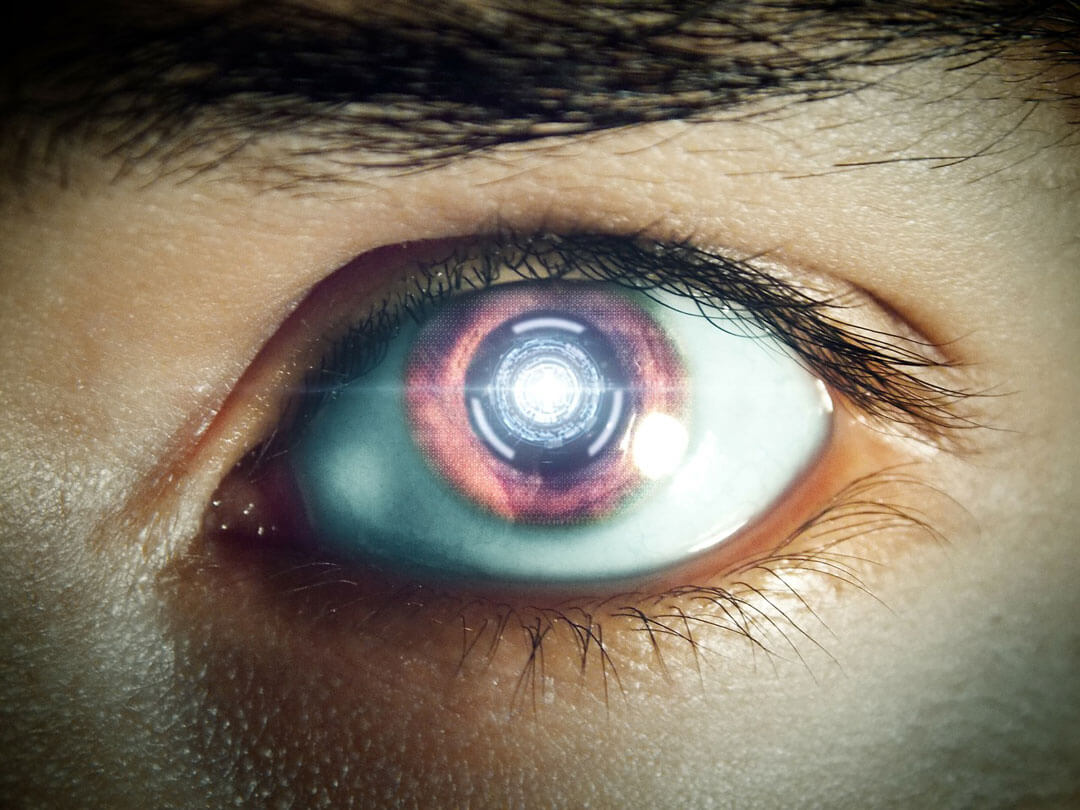

Google’s DeepMind artificial intelligence system is becoming eerily similar to a human. After defeating one in a game of Go, writing poems and songs like a teenager and lip-reading better than professionals, DeepMind is now capable of showing aggression and seeking revenge when it’s about to lose to a fellow AI. #machinemagic

Researchers discovered this inclination to win no matter the cost while testing machines’ willingness to cooperate with each other. They used two games to see how AI agents learn and react. The first one, Gathering, asked two AI agents to gather as many virtual apples as they could. Over the 40 million turns the researchers watched, whenever apples were scarce, the agents started fighting each other. They used laser beams to remove the other player from the game and gather the most apples.

When “less intelligent” AIs were put to the same test, they didn’t use laser beams but didn’t win either, since both gathered the same amount of fruit. So only DeepMind’s more complex models “felt” the need to bring in the big guns, out of greed.

In the second game, Wolfpack, three AIs were put to the test. Two played as wolves, the third as prey. As long as both wolves were near the prey at the moment of capture, they received a reward. It didn’t matter who caught the prey as long as they worked together. In this instance, the AIs learned how to cooperate.

All in all, it’s clear that Google’s AI reached a point in the first game where its own interest trumped any other. The question is: would a more advanced robot make the same choice when faced with a human?


Andrew W

February 21, 2017 at 9:22 pm

Very interesting… The beginnings of Skynet? 😉

Also, it’s “prey” – unless you’re teaching the AI about God?