In recent years, deep learning has become a hot topic across the tech industry. The fact that machines can learn from data and adapt is amazing in and of itself. But the fact that this technology can be carried over to other fields in the future is the cherry on top.

So what is deep learning?

In essence, deep learning is a subset of machine learning, itself part of the field of artificial intelligence, built on neural networks with many layers. Its hallmark is that it can learn from the data provided, including unstructured or unlabeled data, in some cases without supervision.

This data can be processed and arranged, then funneled back to the CPU or GPU, depending on the case, for a better outcome. In simple words: it makes the data less cumbersome to interpret, even with a minimal subset of information.

Sounds amazing! How does this apply to gaming?

Glad you asked!

So, NVIDIA has been fiddling with machine learning and deep learning for a while now. The result was DLSS (Deep Learning Super Sampling), whose first iteration, DLSS 1.0, was pitched as an AI-driven alternative to TAA.

What is TAA? It stands for temporal anti-aliasing. It’s that option you tick in a game’s graphics menu so that jagged edges don’t crawl and fine details don’t flicker on screen as things move. It basically blends information from previous frames to make everything look smooth.

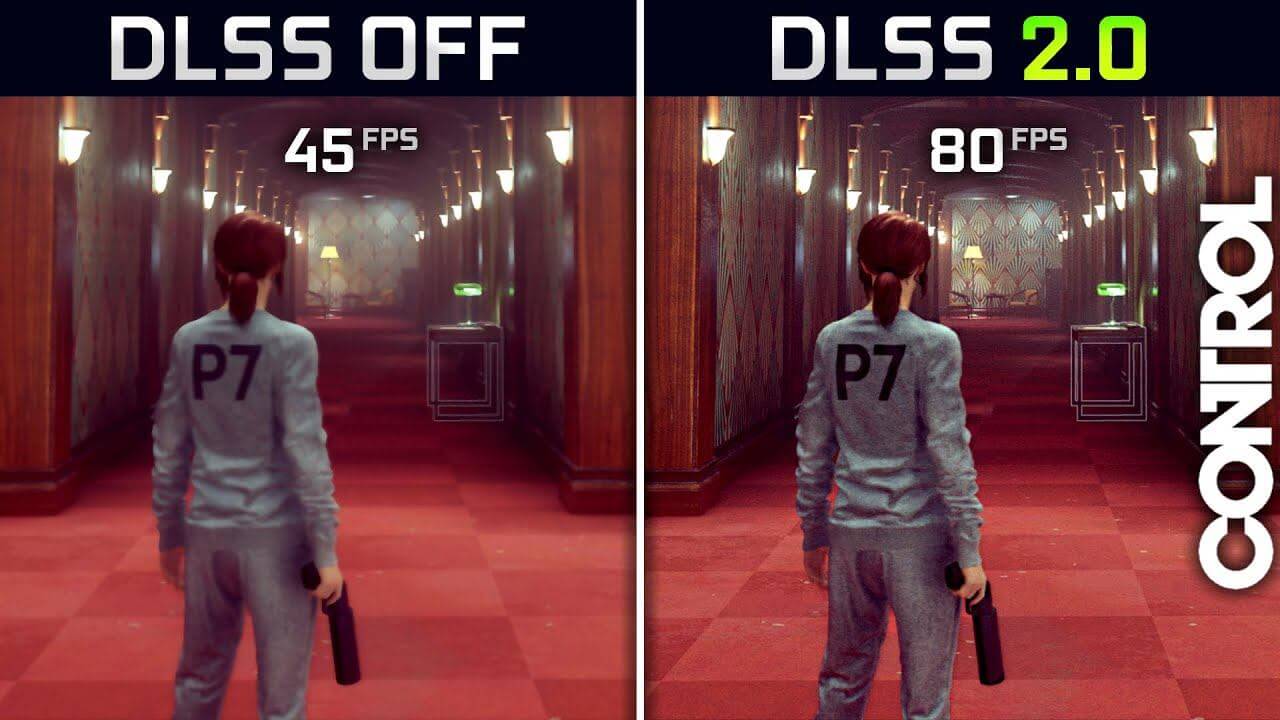

The new DLSS 2.0 from NVIDIA is a big leap in AI rendering. Much more than its predecessor, the tech provides a huge performance boost, from 20% to 120% in some cases, according to NVIDIA’s announcement post.

How is this “magic” possible?

It’s actually pretty simple! The magic word, in this case, is upscaling. The game is rendered at a lower internal resolution, and DLSS 2.0 then upscales each frame so that it resembles a higher-resolution version of itself.
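To get a feel for why a lower internal resolution helps so much, here is a rough back-of-the-envelope sketch in Python. The two resolutions are illustrative assumptions rather than NVIDIA’s official DLSS modes, and the function names are made up for this example.

```python
def pixel_count(width, height):
    """Number of pixels the GPU has to shade for one frame."""
    return width * height


def shading_savings(internal, output):
    """Fraction of per-frame pixel work avoided by rendering at `internal`
    and upscaling to `output` (both are (width, height) tuples)."""
    return 1.0 - pixel_count(*internal) / pixel_count(*output)


# Assumed example: render internally at 1080p, display at 4K.
internal = (1920, 1080)
output = (3840, 2160)

saved = shading_savings(internal, output)
print(f"Pixels shaded per frame drop by {saved:.0%}")
```

The upscaler itself is not free, of course, so the real-world gain is smaller than the raw pixel savings suggest.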

And speaking of magic, it does this while avoiding nasty problems like ghosting, smearing, jitter, or shimmering, the result being a crisp and smooth picture. TAA works by converting the spatial averaging of supersampling into a temporal average: each frame renders only 1 sample per pixel, at a slightly shifted (jittered) sub-pixel position. The result is then saved and blended with the next frame, which is rendered with a new, different jitter.
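The temporal averaging idea can be sketched in a few lines of Python. This is a toy model of a single pixel in a static scene, not how any real engine or DLSS does it: the `scene` function, the edge position, and the use of a Halton sequence for the jitter are all assumptions made for illustration. Real TAA also has to reproject the history when things move, and usually blends with an exponential weight instead of a plain running average.

```python
def halton(index, base):
    """Low-discrepancy sequence value in [0, 1), used here as the jitter."""
    result, f = 0.0, 1.0
    while index > 0:
        f /= base
        result += f * (index % base)
        index //= base
    return result


def scene(x):
    """Hypothetical 1D scene inside one pixel: white left of an edge at 0.3."""
    return 1.0 if x < 0.3 else 0.0


def taa_accumulate(num_frames):
    """Render one jittered sample per frame and average it into the history."""
    history = 0.0
    for frame in range(1, num_frames + 1):
        jitter = halton(frame, 2)      # new sub-pixel offset every frame
        sample = scene(jitter)         # only 1 sample per pixel per frame
        history += (sample - history) / frame  # running temporal average
    return history


# One frame alone is fully black or white (aliased); many frames
# approach the true coverage of the pixel, which is 0.3 here.
print(taa_accumulate(1))
print(taa_accumulate(64))
```

After enough frames the accumulated value lands close to what a much more expensive supersampled render of that pixel would give, which is the whole point of trading spatial samples for temporal ones.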

DLSS 1.0 vs DLSS 2.0: know the basics

DLSS 1.0 was a really nice first shot at deep learning, but it left the network working almost blindfolded. It upscaled every frame on its own, using deep learning to resolve anti-aliasing. That may have worked, but it had the drawback that the network had to be trained for every game, and the performance cost was pretty high. So NVIDIA more or less said it was good and left the developers to figure it out.

DLSS 2.0 does a 180 in its approach: instead of making deep learning babysit anti-aliasing, it uses the TAA framework to resolve the anti-aliasing problem and deep learning to resolve the TAA history problem, that is, deciding how samples from previous frames should be reused. The roles are switched around, with amazing results, if we may say!

Some assembly may be required

The good news is that it no longer needs to be trained for each game. The bad news is that it’s all up to the developers. It does require some work from the game developer to implement, work that can be more or less tedious depending on whether the game already has TAA implemented.

Because of its AI architecture and fixed per-frame cost, its advantages are limited at higher FPS, and it is more useful at higher resolutions. However, in the case of low FPS, the performance improvement can be enormous. A good example is Wolfenstein, which gets a boost from 34 FPS to 68 on an RTX 2060 running in 4K with ray tracing on.
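Why a fixed per-frame cost matters less at low FPS can be shown with some simple arithmetic. The sketch below is a deliberately crude model in Python: it assumes render time scales linearly with pixel count, and the 2 ms upscaling cost and the 0.25 pixel ratio are made-up numbers, not measurements from NVIDIA.

```python
def fps_with_upscaling(native_fps, pixel_ratio, fixed_cost_ms):
    """Estimate FPS when rendering a fraction of the pixels and then paying
    a constant upscaling cost every frame (a simplified, assumed model)."""
    native_ms = 1000.0 / native_fps
    upscaled_ms = native_ms * pixel_ratio + fixed_cost_ms
    return 1000.0 / upscaled_ms


# Assumed numbers: a quarter of the pixels, 2 ms for the upscaling pass.
for native_fps in (30, 60, 240):
    boosted = fps_with_upscaling(native_fps, pixel_ratio=0.25, fixed_cost_ms=2.0)
    print(f"{native_fps} FPS native -> {boosted:.0f} FPS, "
          f"speedup x{boosted / native_fps:.2f}")
```

In this model the relative speedup shrinks as the native frame rate climbs, because the constant upscaling time takes up a growing share of an ever shorter frame.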

Interesting implications for developers and manufacturers

With this technology, we can already see how a new generation of Nintendo consoles can or will shape up. Using DLSS 2.0, companies can upscale and improve some aspects of the console experience, and this can be a game-changer, if it’s implemented.

Imagine a handheld console with a base resolution of 1080p, but with a screen output of 4K or maybe 8K. All this with no graphical artifacts or aberrations to speak of. AMD may have a new thorn in its side with this “next big thing”, but it’s all up to the developers.

Like one superhero said: “The power is yours!”

The ball is in the court of the developers right now. They have to make a decision about DLSS 2.0. Will they or won’t they implement this tech in future installments? Will they update games that have DLSS 1.0 to 2.0? Maybe this has some drawbacks, like a long cycle of development.

There are some uncertainties at the moment, and we need a few concrete examples to base our conclusions on. Right now, we have the NVIDIA statements and tons of fans on the internet who want this to become a thing. If it does… DLSS 2.0 may prove to be a real game-changer, a powerhouse that lets you improve the performance of your game in new ways. Let’s see how this new tech shapes up!

Let us know your opinion about this technology! How would you use it if you had the know-how, and what area of gaming would benefit the most from it? Sound off in the comment section below. All are welcome.
