Robots in factories and warehouses are normally pre-programmed to handle certain types of objects, and that is how they do their jobs: repetitively, always ready to grab or hold the same thing over and over. When a different type of object is thrown into the mix, though, things become frustrating.

Engineers can only go one way or the other: program robots to grasp task-specific items, or program them to grasp objects of varying shape and size at the cost of their ability to perform more complicated tasks.

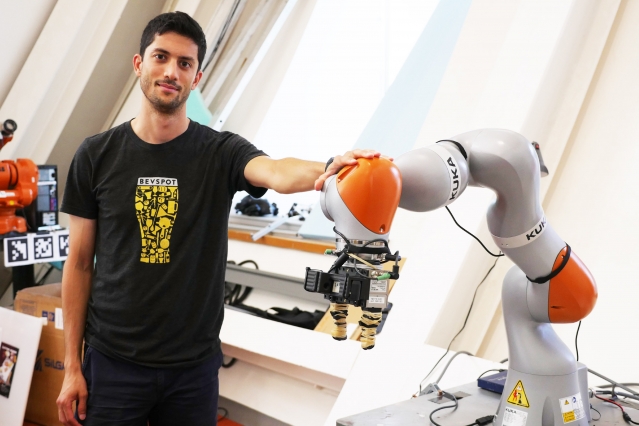

In a new paper, researchers from the MIT Computer Science and Artificial Intelligence Laboratory (CSAIL) describe a system that lets robots inspect objects and understand them visually well enough to manipulate them without having seen them before.

Credit: MITCSAIL / YouTube

The system, called Dense Object Nets (DON), allows the robot to pick up specific items from a pile of either different or similar items.

“Many approaches to manipulation can’t identify specific parts of an object across the many orientations that object may encounter,” says PhD student Lucas Manuelli. “For example, existing algorithms would be unable to grasp a mug by its handle, especially if the mug could be in multiple orientations, like upright, or on its side.”

The team trained DON to view an object as a series of points, map those points together, and build a 3D picture of the object, which then allows the robot to grasp the object at a specified point.
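To make the idea of grasping "by a specified point" more concrete, here is a minimal sketch of how such a point might be located again in a new view. It is not the team's code. It assumes that some trained network (not shown) has already assigned every pixel a short descriptor vector, and that the same physical point gets a similar vector in every view. The names `find_best_match`, `reference` and `new_view`, and the toy numbers, are hypothetical.

```python
from typing import List, Sequence, Tuple

Descriptor = Sequence[float]
DescriptorImage = List[List[Descriptor]]  # rows x columns x descriptor values


def squared_distance(a: Descriptor, b: Descriptor) -> float:
    """Squared Euclidean distance between two descriptor vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def find_best_match(image: DescriptorImage, target: Descriptor) -> Tuple[int, int]:
    """Return the (row, column) of the pixel whose descriptor is closest to target."""
    best_pixel = (0, 0)
    best_dist = float("inf")
    for r, row in enumerate(image):
        for c, descriptor in enumerate(row):
            dist = squared_distance(descriptor, target)
            if dist < best_dist:
                best_dist = dist
                best_pixel = (r, c)
    return best_pixel


# Toy 2x2 "images" with made-up 2-value descriptors (assumed network output).
reference = [
    [(0.0, 0.0), (0.9, 0.1)],
    [(0.1, 0.8), (0.5, 0.5)],
]
# Suppose a person marks pixel (0, 1) in the reference view, e.g. a mug handle.
handle_descriptor = reference[0][1]

# The same object seen in a different orientation, so the pixels have moved.
new_view = [
    [(0.12, 0.79), (0.02, 0.01)],
    [(0.52, 0.48), (0.88, 0.12)],
]

grasp_pixel = find_best_match(new_view, handle_descriptor)
print(grasp_pixel)
```

In this toy case the marked point is found at a different pixel in the new view, which is the behavior the mug-handle example calls for. A real system would work on full camera images and would still have to turn the chosen pixel into a 3D grasp position.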

The team wants DON to be useful not only in factories and warehouses but also in the home, where it could help with cleaning or putting items away while you are out, for example.

This new approach, in which a neural net teaches itself what an object is, might be the answer that computer vision researchers have been striving for.

The team will present their paper at the Conference on Robot Learning in Zürich next month.
