Big tech companies have started to lend an ear to the demands and suggestions of people with various disabilities, investing more time in accessibility and paying more attention to it.

While not all disabilities are covered by tech companies, we are, slowly but surely, heading towards a world where everyone will be able to communicate and work with their devices more easily than ever before.

Google is currently training an AI to understand people with speech impairments, and the U.K.-based Special Effect charity works with companies like Microsoft to create customized controls for people with disabilities. Some of you might also remember the Nadia chatbot we talked about back in 2017.

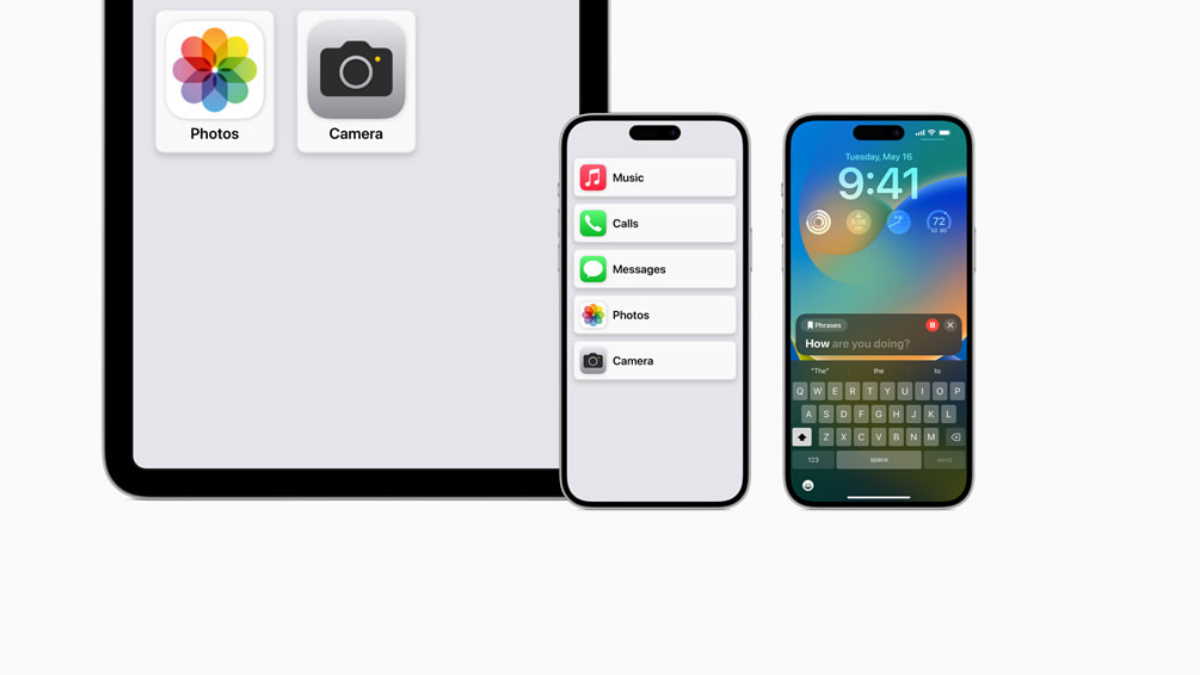

And now, Apple too has revealed its latest addition to the accessibility market: Voice Control.

With Apple’s macOS Catalina and iOS 13, users can now use Voice Control to launch apps, select emojis or simulate various actions that previously required swipes or gestures. To achieve all that, Apple attaches a number to every UI item, and users can bring those numbers up by saying “show numbers”.

All they have to do afterwards is speak the number or add another command.

Users who cannot speak can still select the items they need via a dial or a blow tube.

For those of you who are worried about privacy, you can rest easy: according to Apple, your voice will only be processed on the device, and not a peep of yours will be sent to Apple or stored by the company. Oh yes – all of it works offline too!

Voice Control is supported by all of Apple’s native apps as well as third-party ones that use the Apple accessibility API.

The new feature was not covered in much detail during the keynote, but the next macOS and iOS launches are scheduled for this fall, so we’ll most likely hear more about it then.
