The term ‘deepfake’ was coined in 2017. It denotes a technique in which artificial intelligence combines and superimposes existing images and videos onto other images and videos (usually of people’s faces) using a machine learning technique called a generative adversarial network (GAN).

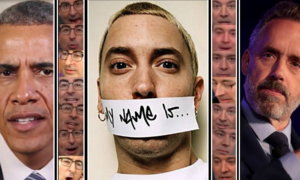

Deepfakes were first introduced to the general public via a video that showed former U.S. President Barack Obama saying words that belonged to a completely separate audio track. Many of you might be familiar with the clip, as it made quite a few headlines back then and paved the way for the deepfakes of today.

A good number of the very first deepfakes originated from a Reddit community called r/deepfakes, where users shared the content they created. Most of it was made purely for fun: some of it included, for example, videos of Nicolas Cage’s face superimposed onto actors in other movies.

However, as expected, pornographic deepfakes soon started to appear: many of them involved the faces of A-list celebrities swapped onto the actresses in adult videos. Reddit, though, was quick to ban the r/deepfakes subreddit for sharing ‘involuntary pornography’. Other platforms, such as Twitter and even the pornography website Pornhub, soon followed suit.

At their core, most of the deepfakes out there are the result of people having fun and messing about. One of the latest examples of the tech at work is a video in which Arnold Schwarzenegger’s Terminator is replaced with Sylvester Stallone.

The opportunity for deepfakes to change the movie industry is definitely there: the technology has evolved significantly since 2017 and will only continue to do so. Perhaps in the future you’ll even be able to star in a film yourself, just by uploading a few images and videos of yourself into a piece of software.

If you thought a certain actor wasn’t right for a role, you could simply swap their face for someone else’s. And who knows what we’ll be able to do with black-and-white movies with the help of deepfakes?

The possibilities are there.

Recently, Corridor Crew, a team of VFX artists, created a Tom Cruise deepfake with the help of an impersonator. The result? Uncanny valley at its finest.

While both of the videos above are nothing but harmless fun and a way for VFX artists and hobbyists to flex their muscles a little, the emergence of deepfakes raises a lot of questions about how we interact with them and what is at stake when the technology falls into the wrong hands.

This is especially true once we take a step back and realize that the technology is available as open-source programs that anyone with an internet connection can download.

For example, it takes an average user around a week to train an AI model on a mid-range graphics card with 8GB of VRAM. Tech labs owned by the likes of NVIDIA, or research institutions like Stanford or Princeton, on the other hand, need only a few hours.

This is where things start to get dark: the deeper we dive into the technology’s abilities, the more we can see the potential for hoaxes to emerge. It’s all too easy to put a politician’s face onto someone else’s and make them say things they never would. These hoaxes, even if they’re intended just for fun, can have horrifying repercussions, be it at an individual level or a political one.

Another dark side of deepfakes has emerged in the shape of an app called DeepNude, which allows anyone to generate realistic-looking nude images of women. All they have to do is feed the app a photo of a clothed woman. It’s available for free on Windows, but a premium version that delivers higher-resolution images costs $99.

While the generated images carry a ‘fake’ watermark, the mark is easy to remove. The free version’s output is not especially photorealistic, but even at a lower resolution the images can still be used as revenge porn to shame or harass women.

You might (or might not) be surprised to find out that there are even forums where anyone can pay experts to create deepfakes of whomever they want, be it co-workers, friends or family.

While these services are not as readily accessible as DeepNude, they still raise a lot of questions about the future of deepfakes and how they will affect us in the long run. The malicious deepfakes may not be real, but the consequences they leave in their aftermath will be.

Follow TechTheLead on Google News to get the news first.