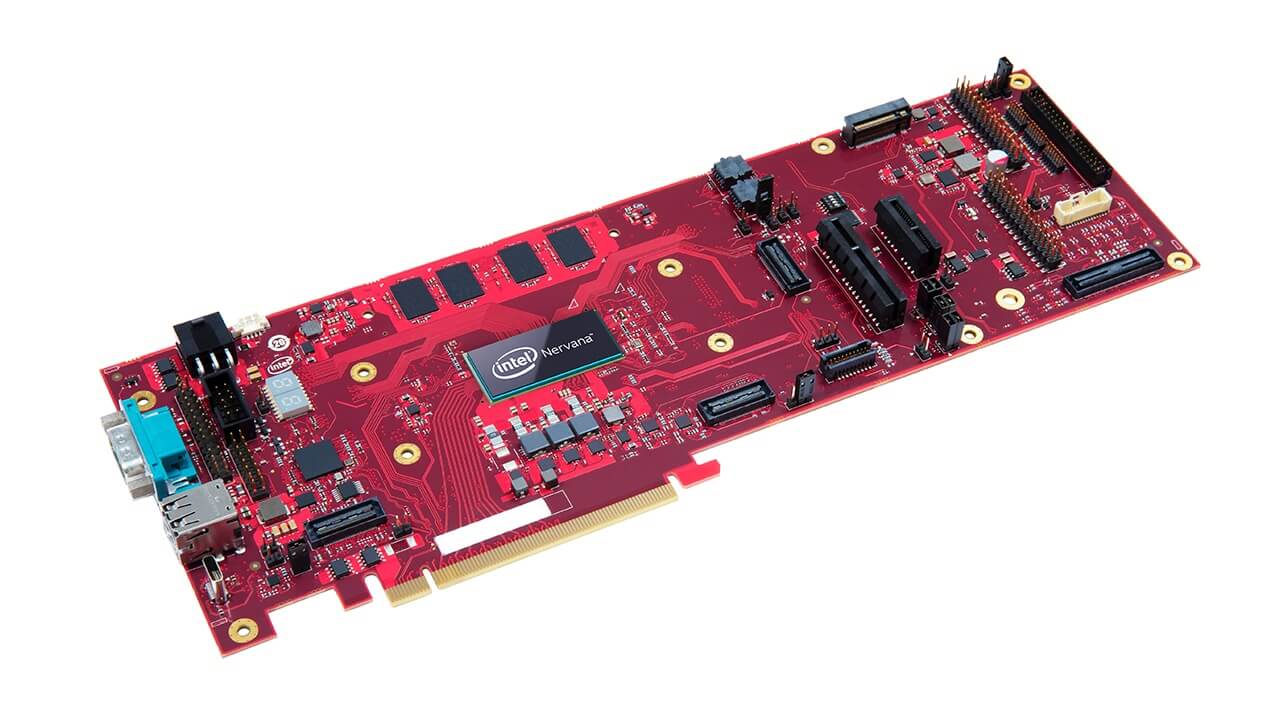

Intel has introduced its first processor designed for artificial intelligence (AI) workloads, a chip built for large computing centers and dubbed ‘Spring Hill’.

That’s definitely catchier than ‘Nervana Neural Network Processor for Inference’, or NNP-I, as the chip is also known.

Spring Hill is based on a 10-nanometer Ice Lake processor, which lets it handle heavy workloads with minimal energy use. Those specs have already convinced Facebook to use it in its data centers:

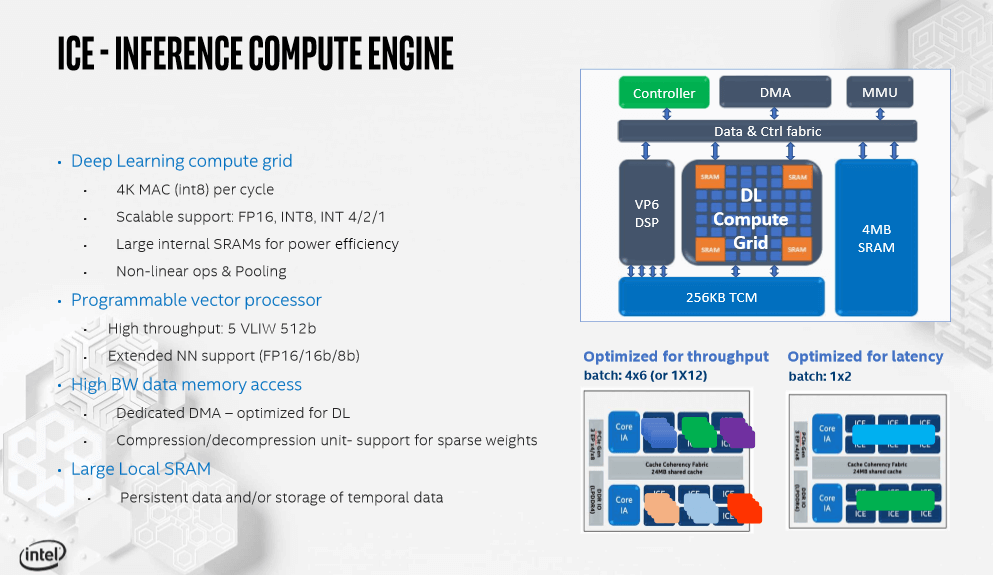

- Intel IA cores with AVX and VNNI

- 12x Inference Compute Engines (ICE)

- 4×32, 2×64 LPDDR4x

- Dynamic power management and FIVR technology

- 24MB LLC for fast inter-ICE and IA data sharing

- Hardware-based sync for ICE-to-ICE communication

“In order to reach a future situation of ‘AI everywhere’, we have to deal with huge amounts of data generated and make sure organizations are equipped with what they need to make effective use of the data and process them where they are collected,” said Naveen Rao, general manager of Intel’s artificial intelligence products group.

Even though the company has only just announced its first AI chip, a presentation indicates that Intel is already planning or designing the next two Spring Hill generations.
