We’ve all known someone who has lied a little in their Facebook or Instagram photos. Whether it meant shedding some extra inches off the waist or covering a zit, we’ve seen it happen, or have even done it ourselves now and then.

Photoshop was released back in 1990, and it has shaped both how we create images and how we modify them. Image editing has become the norm, at least as far as our visual culture is concerned: we’re bombarded with images of near-perfect people at every step, especially on social media platforms.

Most of that visual content also happens to be fake. Even though we know it’s happening and can usually spot it, our minds still register it as reality.

Adobe has realized this too, and says it now recognizes “the ethical implications” of the technology it created.

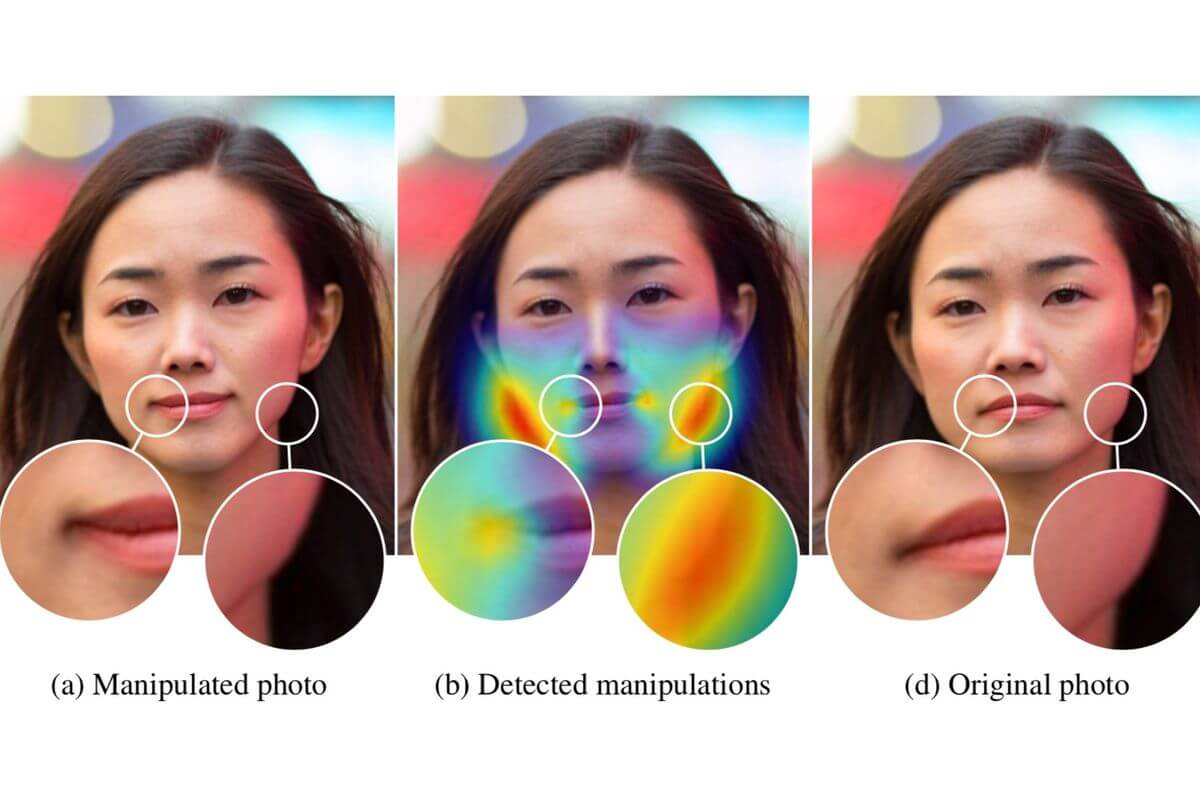

So Adobe researchers Richard Zhang and Oliver Wang, together with UC Berkeley colleagues Sheng-Yu Wang, Dr. Andrew Owens and Professor Alexei A. Efros, created a method that can detect whether an image has been altered with Photoshop’s Face Aware Liquify feature.

“[…] fake content is a serious and increasingly pressing issue. Adobe is firmly committed to finding the most useful and responsible ways to bring new technologies to life – continually exploring using new technologies, such as artificial intelligence (AI), to increase trust and authority in digital media,” Adobe said, explaining why it decided to pursue the project.

The team trained a convolutional neural network (CNN) to recognize altered faces. To build the training set, they applied Face Aware Liquify to thousands of pictures taken from the internet.

“The idea of a magic universal ‘undo’ button to revert image edits is still far from reality. But we live in a world where it’s becoming harder to trust the digital information we consume, and I look forward to further exploring this area of research.”

Richard Zhang

A random subset of those photos was then chosen for training, and an artist was hired to alter some of the images in the data set. This human input was essential because it widened the range of editing techniques the network saw beyond those produced by algorithms alone.

“We started by showing image pairs (an original and an alteration) to people who knew that one of the faces was altered,” Wang said. “For this approach to be useful, it should be able to perform significantly better than the human eye at identifying edited faces.”

People picked out the altered face only 53% of the time, barely better than the 50% they would get by guessing between the two images, while the neural network identified it correctly 99% of the time. In some cases, the tool could even revert the altered images to their original state.

“It might sound impossible because there are so many variations of facial geometry possible,” Professor Efros said. “But, in this case, because deep learning can look at a combination of low-level image data, such as warping artifacts, as well as higher-level cues such as layout, it seems to work.”

It’s worth mentioning that the tool is the first of its kind designed for this particular purpose. It won’t undo all the side effects of manipulated imagery, but hey, at least someone is making an effort.

Follow TechTheLead on Google News to get the news first.